Looking back at the first HDTVs, they weren’t that special. Sure, they looked better than old standard-definition models, but compared to today’s TVs, they weren’t anywhere near as sharp. Why? The difference is that most new TVs today are 1080p/120 or better.

The “1080” part

The highest resolution available for HDTV today is 1920×1080. However, it’s only been in the last four years or so that 1920×1080 TVs have been affordable. Older HDTVs used display panels that were typically 1366×768. They could show programming that was made at 1920×1080, but they just converted it down. Believe it or not, a 1920×1080 signal has nearly double the pixels of a 1366×768 picture… if you were converting from one to the other you were throwing out about half the picture! If you don’t believe me, do the math:

1366×768 = 1,049,088 pixels.

1920×1080 = 2,073,600 pixels.

See what I mean?
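If you’d rather let a computer do the math, here is a minimal Python sketch of the same arithmetic. The function name `pixel_count` and the variable names are made up for illustration; the only real inputs are the two resolutions from the text.

```python
def pixel_count(width, height):
    """Total pixels on a panel with the given width and height."""
    return width * height


# Illustrative names; the resolutions are the two discussed above.
older_panel = pixel_count(1366, 768)
newer_panel = pixel_count(1920, 1080)

# How many times more pixels the 1920x1080 picture carries.
ratio = newer_panel / older_panel

print(f"1366x768  = {older_panel:,} pixels")
print(f"1920x1080 = {newer_panel:,} pixels")
print(f"1920x1080 has about {ratio:.2f} times as many pixels")
```

The ratio comes out just under 2, which is where the “throwing out half the picture” figure comes from.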

This wasn’t really a problem for 32″ TVs and smaller, because if you’re halfway across the room you can’t see that level of detail anyway. But, as TVs got bigger, pictures got blurrier and 1920×1080 became a necessity.

The “p” part

Did you ever wonder what the “p” in 1080p stood for? It stands for “progressive,” and that means that every line is drawn, in order from top to bottom, every time. That’s common sense, right? In the early days of television, it just wasn’t possible to do that. So, to get the quality they needed, televisions interlaced their pictures. Interlacing means drawing every other line, then starting again and drawing the lines you missed. Put it this way:

| Progressive |
| --- |
| 1………………. |
| 2………………. |
| 3………………. |
| 4………………. |
| 5………………. |
| 6………………. |

| Interlaced | |
| --- | --- |
| 1………………. | |
| 2………………. | |
| 3………………. | |
| 4………………. | |
| 5………………. | |
| (back to top) | 6………………. |

TVs that interlace look flickery, and each pass only carries half the lines, so they don’t show as much information at once. So, progressive is important. Luckily progressive is really easy to do with flat panels, a lot easier than it was with old tube TVs.
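To make the difference in drawing order concrete, here is a small Python sketch. It assumes the lines are numbered from 1 at the top and that the interlaced picture draws the odd-numbered lines first; real systems differ on which set goes first, and the function names are purely illustrative.

```python
def progressive_order(num_lines):
    """Draw every line, top to bottom, in a single pass."""
    return list(range(1, num_lines + 1))


def interlaced_order(num_lines):
    """Draw every other line, then go back to the top for the skipped ones.

    Assumption: the odd-numbered lines are drawn on the first pass.
    """
    first_pass = list(range(1, num_lines + 1, 2))
    second_pass = list(range(2, num_lines + 1, 2))
    return first_pass + second_pass


print(progressive_order(6))  # [1, 2, 3, 4, 5, 6]
print(interlaced_order(6))   # [1, 3, 5, 2, 4, 6]
```

Either way all six lines get drawn, but the interlaced picture needs two trips down the screen to do it.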

…and the “120” part?

The 120 refers to the refresh rate. It’s measured in hertz, but that just means “number of times per second.” A TV that’s 1080p/120 can put 120 images up on the screen every second. That helps somewhat with flickering and eyestrain, but the real reason is to match up film and video.

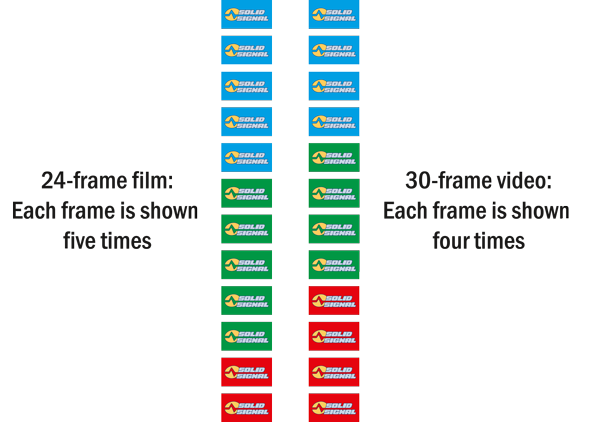

For most of their history, movies have been shot at roughly 24 frames per second. However, in the United States, standard-definition video has always been shot at roughly 30 full frames per second (actually, 60 interlaced half-frames, or “fields,” per second). HD video can be shot at 24, 30, or 60 frames per second.

Why? When television was invented, it wasn’t possible to use 24 frames per second with the 60-cycles-per-second electrical system. And, converting film cameras to use 30 frames of film per second would have cost more in film. So there you go.

Unfortunately, when you convert 24-frame film to 30-frame video you lose some quality, because 30 isn’t a whole multiple of 24 and some film frames have to stay on screen longer than others. We’ve grown accustomed to this and it’s part of the “film look” that makes us feel like we’re watching a quality TV show. However, it’s also nice to see a film as it was shown in the theater. Isn’t that one of the reasons you got a big TV? So, you need a TV that can show 24-frame, 30-frame and 60-frame video without any loss of quality. You need a TV that can show 120 frames in one second, because 120 is the smallest number that 24, 30, and 60 all divide into evenly. Any less and something is lost in translation.

You can see it in this diagram. As the TV goes along showing 120 frames every second, it’s able to show the same frame of film 5 times, the same frame of 30-frame video 4 times, or the same frame of 60-frame video twice, without any problem.
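The arithmetic behind that is easy to check. The sketch below is only an illustration (the names `SOURCE_RATES` and `repeats_per_frame` are made up here): it finds the smallest refresh rate that 24, 30, and 60 all divide into, then compares how a 60 Hz and a 120 Hz set handle each source.

```python
from functools import reduce
from math import gcd

# Frame rates mentioned in the text: film, 30-frame video, 60-frame video.
SOURCE_RATES = [24, 30, 60]


def lcm(a, b):
    """Least common multiple of two positive integers."""
    return a * b // gcd(a, b)


def repeats_per_frame(refresh_hz, source_fps):
    """How many refreshes each source frame gets, or None if it is uneven."""
    if refresh_hz % source_fps != 0:
        return None
    return refresh_hz // source_fps


# Smallest refresh rate that fits every source rate a whole number of times.
smallest_common_rate = reduce(lcm, SOURCE_RATES)
print(f"Smallest rate that fits all sources: {smallest_common_rate}")

for refresh_hz in (60, 120):
    for fps in SOURCE_RATES:
        repeats = repeats_per_frame(refresh_hz, fps)
        if repeats is None:
            print(f"{refresh_hz} Hz, {fps} fps: does not divide evenly")
        else:
            print(f"{refresh_hz} Hz, {fps} fps: each frame shown {repeats}x")
```

At 60 Hz the 24-frame film is the odd one out (60 ÷ 24 = 2.5), while at 120 Hz every source gets a whole number of repeats: 5, 4, and 2.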

What about higher resolutions and frame rates?

Higher resolutions aren’t really an issue… yet. Right now there isn’t any content that is more detailed than 1920×1080. Higher-resolution content is coming, but it’s not here yet. As for higher refresh rates, you can get TVs with refresh rates of 240, 480, and even 600 times per second. They make action even smoother, but the big jump in quality starts with 120. Anything beyond that is just gravy.