This can be an incredibly difficult subject to write about. I tend to get the same question over and over again, and even though I answer it the same way, it seems like there’s always a new loophole.

This time it came from a customer who asked:

Can I use a line amplifier on one run from my DIRECTV SWM to go 300 feet, as long as I disable whole-home sharing?

Let’s get into the details: How long of a run can you get?

I’ve written really long tutorials on cable length, but maybe you just want a quick and easy set of numbers. So, here’s the 5-minute guide:

First, look at your splitters. You’ll lose 3dB for every 1×2 splitter, 7dB for every 1×4, and 14dB for every 1×8. These are generic numbers but they’re pretty good.
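If you'd rather let a script do the adding, here's a minimal Python sketch that totals splitter loss using the generic figures above. The table and the `splitter_loss_db` name are just for illustration. If you know the loss printed on your actual splitters, use that instead.

```python
# Generic splitter losses from the rule of thumb above, in dB.
# Real splitters vary; use the number printed on yours if you can.
SPLITTER_LOSS_DB = {
    "1x2": 3,
    "1x4": 7,
    "1x8": 14,
}


def splitter_loss_db(splitters):
    """Total loss, in dB, for every splitter the signal passes through.

    `splitters` is a list like ["1x2", "1x4"], one entry per splitter
    between the source and the TV or receiver.
    """
    return sum(SPLITTER_LOSS_DB[s] for s in splitters)


# A 1x2 feeding a 1x4: 3 + 7 = 10 dB.
print(splitter_loss_db(["1x2", "1x4"]))
```

Because losses in dB simply add, a chain of splitters is just the sum of each one.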

At antenna frequencies, a decent cable will lose about 3dB per 100 feet. At satellite frequencies it’s more like 4dB per 100 feet.
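Here's the same idea for cable, as a small Python sketch. It scales the rough per-100-foot figures above to any length. The `cable_loss_db` name and the "antenna"/"satellite" labels are hypothetical, and real loss depends on the specific cable and frequency.

```python
# Approximate coax loss per 100 feet, in dB, from the figures above.
LOSS_PER_100_FT_DB = {
    "antenna": 3.0,
    "satellite": 4.0,
}


def cable_loss_db(feet, band):
    """Approximate cable loss in dB for a run of `feet` on the given band."""
    return LOSS_PER_100_FT_DB[band] * feet / 100


print(cable_loss_db(300, "antenna"))    # 9.0
print(cable_loss_db(150, "satellite"))  # 6.0
```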

So, add up the losses from your splitters and cable runs. Say you're running an antenna system with a 1×4 splitter and the longest total run is 300 feet (from antenna to TV). That's 7dB for the splitter and 9dB for the cable, or 16dB total. You need an amplifier that gives you at least 16dB of boost to compensate, so a 14dB amplifier isn't enough.
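Putting the two numbers together gives you a simple loss budget. This sketch uses hypothetical function names and the same generic figures as above. It reproduces the 1×4-plus-300-feet example and checks it against a 14dB amplifier. "Enough" here means the gain at least matches the loss, which is the rule of thumb, not a guarantee.

```python
SPLITTER_LOSS_DB = {"1x2": 3, "1x4": 7, "1x8": 14}
LOSS_PER_100_FT_DB = {"antenna": 3.0, "satellite": 4.0}


def total_loss_db(splitters, feet, band):
    """Splitter loss plus cable loss for the longest run, in dB."""
    splitter_loss = sum(SPLITTER_LOSS_DB[s] for s in splitters)
    cable_loss = LOSS_PER_100_FT_DB[band] * feet / 100
    return splitter_loss + cable_loss


def amp_is_enough(amp_gain_db, splitters, feet, band):
    """True if the amplifier's gain at least covers the total loss."""
    return amp_gain_db >= total_loss_db(splitters, feet, band)


# The antenna example above: one 1x4 splitter and a 300-foot run.
print(total_loss_db(["1x4"], 300, "antenna"))        # 16.0
print(amp_is_enough(14, ["1x4"], 300, "antenna"))    # False
print(amp_is_enough(16, ["1x4"], 300, "antenna"))    # True
```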

Here's another example: Say you're using DISH or non-SWM DIRECTV, and the total run from the dish to the receivers is 400 feet. At about 4dB per 100 feet, you've lost 16dB of signal, which is too much for your average 14dB amplifier to compensate for.
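You can also run the math backwards and ask how far a given amplifier will get you. This sketch assumes the rough 4dB-per-100-feet satellite figure and no splitters. `max_run_feet` is a hypothetical helper, and it ignores every other loss in the system.

```python
SAT_LOSS_PER_100_FT_DB = 4.0  # rough satellite-frequency figure from above


def max_run_feet(amp_gain_db):
    """Longest satellite cable run an amplifier's gain can make up for, in feet."""
    return amp_gain_db / SAT_LOSS_PER_100_FT_DB * 100


print(400 * SAT_LOSS_PER_100_FT_DB / 100)  # 16.0 dB lost on the 400-foot run
print(max_run_feet(14))                    # 350.0 feet
```

By that estimate, a 14dB amplifier covers roughly 350 feet of cable, so the 400-foot run comes up short.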

But what about DIRECTV SWM?

Most of the questions we get involve DIRECTV SWM. DIRECTV SWM is the least flexible of all the cabling systems used in the US because of two factors.

SWM carries three types of signals

The SWM system is designed to provide everything you need on a single cable. This is a lot more complex for AT&T than it is for DISH or for cable TV. There are potentially six different kinds of signals coming from a DIRECTV dish, as opposed to only three from DISH and one from the cable company. The hardware required to put the signal you want on one cable, while providing up to 13 locations with the signals they want, makes things particularly hard.

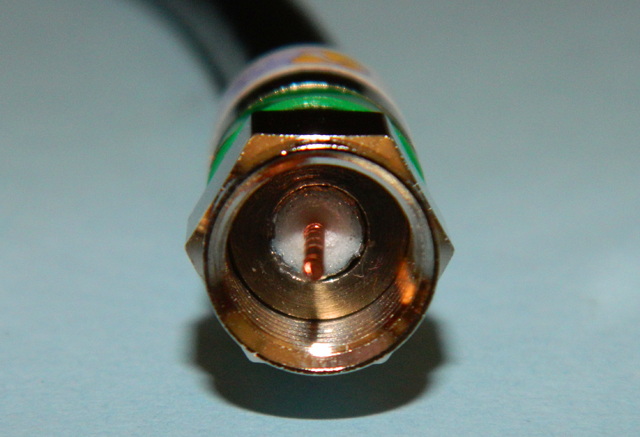

In addition to the satellite signal itself, the same cable also carries two other types of signals. The connected-home, or whole-home, signal carries network information between receivers, allowing devices like Genie clients to function. There’s also a return path, a low-frequency signal that allows receivers to request the kind of signal they want from the dish.

Amplifiers are right out

DIRECTV SWM is a particularly difficult beast, partly because of the whole-home signal and the return path signal. Theoretically, it would be possible to build an amplifier that works with all three signals, but it would be prohibitively expensive and hardly anyone would buy it. Normal amplifiers amplify only one of the three.

To answer the customer's question, you can eliminate the whole-home signal by using band stop filters, but you won't get far without the control signal on the return path. Not only is there no amplifier that works with it, but most amplifiers designed for satellite will degrade that signal.

The bottom line is, you can't use amplifiers after the SWM in most cases. If you aren't able to do a lot of measuring, the best thing to do is keep SWM cable runs under 150 feet. There is the option of using the HP version of the SWM-30, but that's just dangerous. Solid Signal doesn't even offer that part to regular customers, because if you use it improperly it will destroy your equipment.
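If you're planning several rooms at once, a quick check against that 150-foot rule of thumb can flag the runs that need rethinking. This is a minimal sketch. The room names and the `runs_too_long` helper are hypothetical, and "under 150 feet" is treated as a hard cutoff.

```python
SWM_MAX_RUN_FT = 150  # rule-of-thumb limit from above when you can't measure


def runs_too_long(runs_ft):
    """Return the rooms whose SWM run is at or over the rule-of-thumb limit.

    `runs_ft` maps a room name to its cable length in feet, measured from
    the SWM to the receiver. Since amplifiers aren't an option after the
    SWM, these runs have to get shorter.
    """
    return [room for room, feet in runs_ft.items() if feet >= SWM_MAX_RUN_FT]


runs = {"living room": 90, "bedroom": 140, "garage": 210}
print(runs_too_long(runs))  # ['garage']
```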

The right way to use an amplifier

The best way to use an amplifier for satellite is really the only way: you can use amplifiers to your heart's content on the runs from the dish to the multiswitch. This is true for both DIRECTV and DISH. For DIRECTV systems, choose an amplifier from AT&T; DISH systems can use one from Sonora. If your cable run is too long for the amplifier you're using, it's possible to add another amplifier, but remember that there does come a point where the signal is just too weak to amplify. In cases like that, a second dish or antenna is your best choice.
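To get a rough idea of how many amplifiers a long dish-to-multiswitch run would take, you can divide the total loss by each amplifier's gain and round up. This sketch treats amplifiers as simple gain stages, which is an assumption. It doesn't account for the noise each stage adds, and that noise is exactly why more amplifiers eventually stop helping. `amps_needed` is a hypothetical helper.

```python
import math


def amps_needed(total_loss_db, amp_gain_db):
    """Smallest number of identical amplifiers whose combined gain covers the loss.

    Gain arithmetic only: it ignores the noise each stage adds, which is
    why, past some point, more amplifiers won't rescue a weak signal.
    """
    if total_loss_db <= 0:
        return 0
    return math.ceil(total_loss_db / amp_gain_db)


# 16dB of loss with 14dB amplifiers: one isn't enough, two cover it.
print(amps_needed(16, 14))  # 2
```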